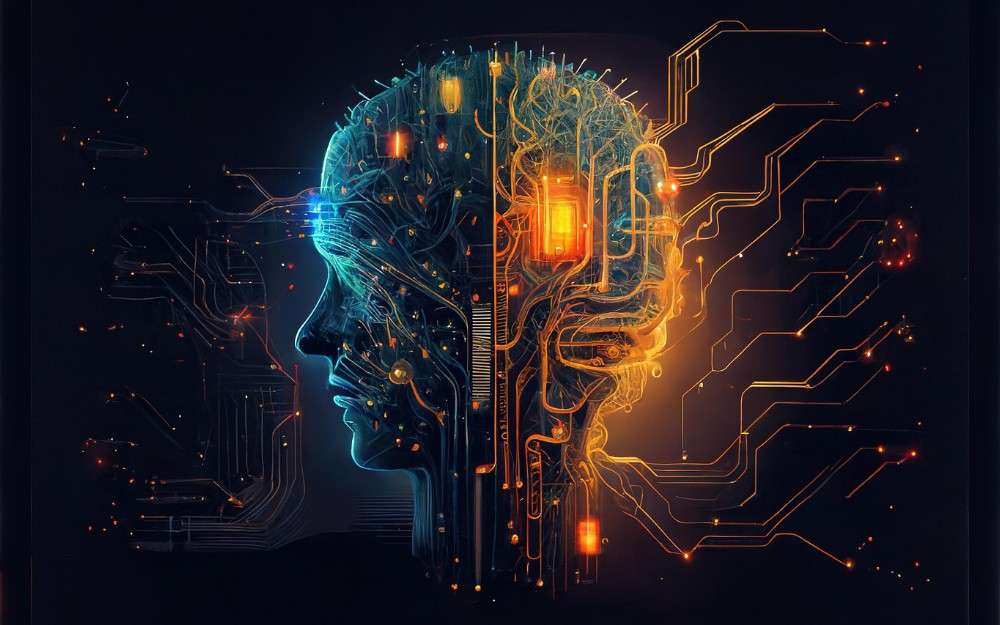

A Large Language Model (LLM) is a deep learning model trained on enormous datasets to read, summarize, translate, predict, and generate content.

Drawing on the patterns and structures learned from that training data, these models are designed to understand and generate human-like text.

LLMs such as OpenAI’s GPT-4, Google’s Gemini, Meta’s LLaMA, and Anthropic’s Claude excel in a variety of applications because they have been trained on a wide range of data and optimized using techniques like pretraining and fine-tuning.

One of the most popular uses for LLMs is in business, where they help with customer service, troubleshooting, and open-ended conversations in response to user-provided prompts.

Chatbots, virtual assistants, and code generation platforms are examples of tools that leverage LLMs.

Because they process and generate vast amounts of potentially sensitive data, LLMs are exposed to a variety of cyber threats, which makes security in these systems essential.

According to experts, strong LLM data privacy practices include filtering outputs, limiting access, and protecting training inputs. These measures help keep systems compliant and reduce the risk of LLM data leakage.
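As an illustration of the output-filtering practice, here is a minimal Python sketch that redacts sensitive-looking strings from a model response before it reaches the user. The `filter_output` function and the two regular expressions are assumptions made for this example, not part of any particular LLM product, and a real deployment would need a much broader, tested set of patterns.

```python
import re

# Hypothetical patterns for illustration only; real systems need a broader set.
REDACTION_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def filter_output(text: str) -> str:
    """Replace anything matching a sensitive pattern with a labeled placeholder."""
    for label, pattern in REDACTION_PATTERNS.items():
        text = pattern.sub(f"[REDACTED {label}]", text)
    return text


if __name__ == "__main__":
    response = "Contact jane.doe@example.com, SSN 123-45-6789."
    print(filter_output(response))
```

A filter like this would typically sit between the model and the user, alongside access controls, so that sensitive data is masked even when it slips into a response.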

Large language models also have the potential to be very effective in cybersecurity defense against modern threats. ChatGPT, Gemini, Claude, and similar tools excel at interpreting subtle context. Much like a professional analyst, they can correlate alerts, read through logs as though they were a story, and even summarize complex incidents.