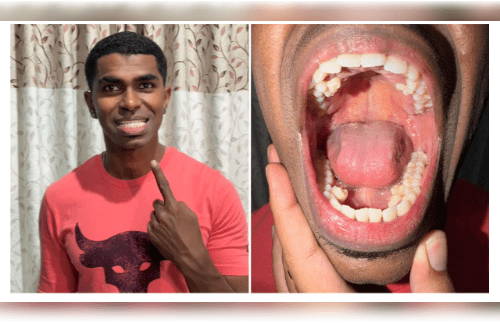

Reviewing, screening, and managing user-generated content on social media platforms is known as social media content moderation.

Social media content moderation is becoming increasingly important as platforms work to protect their users from potential misuse.

Content moderation services, such as image and video moderation, help online platforms manage this kind of content. The material is reviewed by specialists known as content moderators (on social media, they’re called social media moderators), who decide whether each item should be allowed or removed.

AI content moderation automatically searches for, detects, and removes content that violates the rules or guidelines of a social media platform, website, or other online service.

Several tools support this. Microsoft Azure AI Content Safety, for example, detects harmful user-generated and AI-generated content in applications and services. It offers text and image APIs that let developers detect harmful or inappropriate content.
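A minimal sketch of calling the text API over REST with only the Python standard library. It assumes the `text:analyze` operation path, the `2023-10-01` API version, the `Ocp-Apim-Subscription-Key` header, and a response with a `categoriesAnalysis` list of `{category, severity}` entries; verify these against the current Azure reference. The `should_block` threshold is a hypothetical policy, not an Azure default.

```python
import json
import urllib.request

# Assumption: path and api-version match the Azure AI Content Safety text
# analysis REST API at the time of writing; check the current reference.
API_VERSION = "2023-10-01"


def build_text_request(endpoint: str, key: str, text: str) -> urllib.request.Request:
    """Build (without sending) a POST request to the text:analyze operation."""
    url = f"{endpoint.rstrip('/')}/contentsafety/text:analyze?api-version={API_VERSION}"
    body = json.dumps({"text": text}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Ocp-Apim-Subscription-Key": key, "Content-Type": "application/json"},
        method="POST",
    )


def should_block(analysis: dict, max_severity: int = 2) -> bool:
    """Hypothetical policy: block when any category's severity exceeds max_severity."""
    return any(
        item.get("severity", 0) > max_severity
        for item in analysis.get("categoriesAnalysis", [])
    )


def analyze_text(endpoint: str, key: str, text: str) -> dict:
    """Send the request and return the parsed JSON response (needs a real resource)."""
    with urllib.request.urlopen(build_text_request(endpoint, key, text)) as resp:
        return json.load(resp)
```

With a real resource, `should_block(analyze_text(endpoint, key, comment))` gives a yes/no decision. Keeping request building and the decision rule separate means the policy can be tested without network access.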

The moderations endpoint of the OpenAI platform checks whether text or images may be harmful. If harmful content is detected, developers can take corrective action, such as filtering the content or intervening with the user accounts that authored it.
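A minimal sketch of calling the endpoint with the standard library, assuming the `https://api.openai.com/v1/moderations` URL, a bearer token read from an `OPENAI_API_KEY` environment variable, the `omni-moderation-latest` model name, and a response whose `results[0]` carries `flagged` and a `categories` map. The helper names are illustrative, not part of any SDK.

```python
import json
import os
import urllib.request

MODERATIONS_URL = "https://api.openai.com/v1/moderations"


def build_moderation_request(text: str, api_key: str,
                             model: str = "omni-moderation-latest") -> urllib.request.Request:
    """Build (without sending) a moderation request for a single text input."""
    body = json.dumps({"model": model, "input": text}).encode("utf-8")
    return urllib.request.Request(
        MODERATIONS_URL,
        data=body,
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


def flagged_categories(response: dict) -> list:
    """Names of the categories flagged in the first result, sorted."""
    result = response["results"][0]
    if not result.get("flagged"):
        return []
    return sorted(name for name, hit in result["categories"].items() if hit)


def moderate(text: str) -> list:
    """Send the request (needs a valid key) and return the flagged categories."""
    req = build_moderation_request(text, os.environ["OPENAI_API_KEY"])
    with urllib.request.urlopen(req) as resp:
        return flagged_categories(json.load(resp))
```

A non-empty list from `moderate(...)` is the point where an application would filter the post or escalate the account.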

Checkstep is an AI content moderation platform that combines cutting-edge AI and automation with human monitoring to detect content of interest faster and to set and enforce policies.
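Combining automation with human monitoring is often done by routing on a model’s risk score. The sketch below is a generic illustration of that idea, not Checkstep’s API: `ModerationRouter` and both thresholds are hypothetical. High-scoring items are removed automatically, borderline items go to a human review queue, and the rest are allowed.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class ModerationRouter:
    """Route items by model score; thresholds are illustrative, not tuned values."""
    remove_at: float = 0.9   # at or above: remove automatically
    review_at: float = 0.5   # at or above (but below remove_at): send to a human
    review_queue: deque = field(default_factory=deque)

    def __post_init__(self) -> None:
        if not 0.0 <= self.review_at <= self.remove_at <= 1.0:
            raise ValueError("expected 0 <= review_at <= remove_at <= 1")

    def route(self, item_id: str, score: float) -> str:
        """Return 'remove', 'human_review', or 'allow' for one scored item."""
        if score >= self.remove_at:
            return "remove"
        if score >= self.review_at:
            self.review_queue.append(item_id)
            return "human_review"
        return "allow"
```

Widening the gap between `review_at` and `remove_at` sends more items to people and fewer to automatic removal, trading moderator workload for fewer automated mistakes.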

Amazon Rekognition Content Moderation uses machine learning (ML) to automate and streamline image and video moderation workflows.
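A minimal sketch for images stored in S3, assuming `boto3` is installed and AWS credentials are configured. It uses Rekognition’s `detect_moderation_labels` call, whose response contains a `ModerationLabels` list with `Name`, `ParentName`, and `Confidence` fields. The `flagged_names` helper and the blocked-label set are hypothetical policy choices.

```python
def fetch_moderation_labels(bucket: str, key: str, min_confidence: float = 50.0) -> list:
    """Call Rekognition's DetectModerationLabels on an S3 object (needs AWS credentials)."""
    import boto3  # imported here so flagged_names() can be used without boto3 installed

    client = boto3.client("rekognition")
    response = client.detect_moderation_labels(
        Image={"S3Object": {"Bucket": bucket, "Name": key}},
        MinConfidence=min_confidence,
    )
    return response["ModerationLabels"]


def flagged_names(labels: list, blocked: set, min_confidence: float = 80.0) -> list:
    """Return blocked label names (matched on Name or ParentName) at or above min_confidence."""
    hits = set()
    for label in labels:
        if label["Confidence"] < min_confidence:
            continue
        for name in (label["Name"], label.get("ParentName", "")):
            if name in blocked:
                hits.add(name)
    return sorted(hits)
```

Matching on `ParentName` as well as `Name` lets a policy block a whole category without listing every sub-label. Label names vary between model versions, so the blocked set should come from the current taxonomy.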

Google Cloud’s AI provides powerful machine learning models that can carry out complex image and video analysis. These models can identify and classify visual content based on predetermined criteria, ensuring that only relevant content is shown.
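One example of such a model is SafeSearch detection in the Cloud Vision API. This sketch assumes the REST `v1/images:annotate` endpoint with API-key authentication, a `SAFE_SEARCH_DETECTION` feature, and a `safeSearchAnnotation` response whose categories (such as `adult`, `violence`, and `racy`) hold likelihood strings. The `risky_categories` threshold is a hypothetical policy.

```python
import base64
import json
import urllib.request

# Likelihood values returned by the Vision API, from lowest to highest.
LIKELIHOODS = ["UNKNOWN", "VERY_UNLIKELY", "UNLIKELY", "POSSIBLE", "LIKELY", "VERY_LIKELY"]


def safe_search_body(image_bytes: bytes) -> str:
    """JSON body requesting SafeSearch detection for one inline image."""
    return json.dumps({"requests": [{
        "image": {"content": base64.b64encode(image_bytes).decode("ascii")},
        "features": [{"type": "SAFE_SEARCH_DETECTION"}],
    }]})


def annotate(image_bytes: bytes, api_key: str) -> dict:
    """POST to images:annotate with an API key (needs a real key and network access)."""
    req = urllib.request.Request(
        f"https://vision.googleapis.com/v1/images:annotate?key={api_key}",
        data=safe_search_body(image_bytes).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)


def risky_categories(response: dict, at_least: str = "LIKELY") -> list:
    """Categories rated at or above the given likelihood; non-string fields are ignored."""
    annotation = response["responses"][0].get("safeSearchAnnotation", {})
    floor = LIKELIHOODS.index(at_least)
    return sorted(
        category for category, level in annotation.items()
        if level in LIKELIHOODS and LIKELIHOODS.index(level) >= floor
    )
```

Because the API returns ordered likelihood labels rather than a single yes/no answer, the platform chooses the cut-off. Lowering `at_least` to `"POSSIBLE"` makes the filter stricter.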

Hive Moderation offers a new vision language model that processes image and text inputs and returns answers both in plain language and as structured JSON.